Be the brand the model already knows. By name.

We engineer the brand presence — across Wikipedia, structured data, news, GitHub, and the rest of the open web — that makes GPT, Claude, Gemini, Perplexity, and Copilot name you when users ask about your category. Long game, big compounding payoff.

What is Large Language Model Optimization?

LLMO is the work of building enough credible, well-placed brand presence across the open web that large language models name your brand when they answer questions about your category. Unlike GEO (Generative Engine Optimization, which targets engines that fetch and cite live sources), LLMO targets what the model itself has internalized from its training data.

It's a long game, since new model versions with refreshed training data typically roll out every 6–12 months, but the payoff compounds. Once a model has learned your brand as the default answer to a category-level question, every user who asks that question hears your name, and you never pay for the impression.

GEO gets you cited live (the engine fetches your page). LLMO gets you cited from memory (the model has already learned your brand).

LLMO vs GEO vs AEO

Three optimization surfaces (LLMO, GEO, and AEO, or Answer Engine Optimization), three timelines, three measurement frames. Most engagements run two of the three together.

What you get with us

The deliverables — written down, so the scope is the scope.

- 01

Prompt mining for your category

Identify the questions users are asking LLMs about your space. We sample across models to map what users ask and how each model currently answers.

- 02

Brand-mention baseline

Measure how each model currently mentions you — frequency, accuracy, sentiment. The before-picture you'll iterate against.

- 03

Entity & E-E-A-T work

Wikidata/Wikipedia presence (where eligible), Organization/Person schema, and author bios with expertise signals: the signals LLMs use to recognize your brand as a distinct entity.

- 04

Distributed presence strategy

A plan to land brand mentions across the high-trust surfaces models train on — Wikipedia, news, structured Q&A, GitHub, datasets, industry publications.

- 05

Citation-friendly content production

Long-form factual content that LLMs lift cleanly: clear claims, named entities, and source citations of its own. Same craft as GEO content, written to serve both surfaces.

- 06

LLM monitoring dashboard

Weekly automated probes across GPT, Claude, Gemini, Perplexity, and Copilot, tracking your brand-mention frequency, sentiment, and citation share over time.
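To make deliverable 03 more concrete, here is a minimal Python sketch that builds a schema.org Organization entity with a linked founder Person and wraps it as JSON-LD for a page's `<head>`. It is an illustration under stated assumptions, not a description of any specific tooling: the brand, people, and URLs are hypothetical placeholders, and the function names `organization_jsonld` and `as_script_tag` are invented for this example. The property names (`@context`, `@type`, `name`, `url`, `sameAs`, `founder`, `jobTitle`) are standard schema.org vocabulary.

```python
import json


def organization_jsonld(name, url, same_as, founder_name, founder_title, founder_same_as):
    """Build a schema.org Organization entity with a linked founder Person."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs points at the same brand's profiles elsewhere (Wikidata,
        # Wikipedia, GitHub, ...) so they can be read as one entity.
        "sameAs": same_as,
        # Explicit brand-to-founder link, expressed as a nested Person.
        "founder": {
            "@type": "Person",
            "name": founder_name,
            "jobTitle": founder_title,
            "sameAs": founder_same_as,
        },
    }


def as_script_tag(data):
    """Wrap the entity in the JSON-LD <script> tag used in a page's <head>."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    # Every value below is a hypothetical placeholder.
    entity = organization_jsonld(
        name="Example Brand",
        url="https://www.example.com",
        same_as=[
            "https://www.wikidata.org/wiki/Q_EXAMPLE",
            "https://github.com/example-brand",
        ],
        founder_name="Jane Doe",
        founder_title="Founder and CEO",
        founder_same_as=["https://www.linkedin.com/in/example"],
    )
    print(as_script_tag(entity))
```

The `sameAs` links are what tie the on-site entity to its Wikidata item and other profiles; which properties belong in a real deployment depends on what the brand can truthfully claim.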

How we run an LLMO engagement

Five stages, run on a 6-month minimum retainer. LLM training cycles are slow, so we plan for compounding wins, not quick spikes.

- 01

Prompt mining

We sample 50–150 prompts across the major LLMs to map what users in your category are actually asking — and what each model is currently answering. The output is a heatmap of category-level prompt coverage and a ranked list of 'questions worth being the answer to'.

- 02

Brand-mention baseline

We run a structured probe against GPT, Claude, Gemini, Perplexity, and Copilot for every priority prompt. We log how often you're mentioned, with what accuracy, and with what sentiment. Competitors get the same treatment. This is the before-picture we iterate against monthly.

- 03

Entity & E-E-A-T work

Make your brand machine-parseable. That includes Wikidata/Wikipedia presence where eligibility allows, Organization and Person schema across your site, author bios with expertise signals, and explicit linking between brand entities (founder, products, locations). LLMs weight these heavily when consolidating their model of who you are.

- 04

Distributed presence

Coordinated execution across the surfaces LLMs train on: guest articles in industry publications, structured contributions to open Q&A communities, GitHub presence (where applicable), and curated dataset and benchmark contributions. We don't spam; we land mentions in places that compound over multiple training cycles.

- 05

LLM monitoring

Weekly automated probes against each major model. The dashboard tracks brand-mention frequency, accuracy of factual claims, sentiment direction, and category-level citation share. We iterate monthly — doubling down on what's moving, replacing what isn't.
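The baseline and monitoring stages both come down to counting brand mentions in logged model responses. The sketch below assumes the responses have already been collected (it calls no model API), and the `ProbeResult` record, brand names, and responses are hypothetical. It computes two metrics: mention rate, the fraction of a model's responses that name a brand, and citation share, read here as a brand's fraction of all brand mentions across responses. That is one reasonable definition, not necessarily the one any given dashboard uses.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ProbeResult:
    """One logged model response (hypothetical record shape)."""
    model: str     # free-form label, e.g. "gpt" or "claude"
    prompt: str
    response: str


def mentions(brand: str, text: str) -> bool:
    # Case-insensitive whole-word match. The \b anchors assume the brand
    # name begins and ends with a letter, digit, or underscore; a name
    # like "C++" would need a different pattern.
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, text, re.IGNORECASE) is not None


def mention_rates(results: list[ProbeResult], brands: list[str]) -> dict[str, dict[str, float]]:
    """Per model: the fraction of that model's responses naming each brand."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for r in results:
        totals[r.model] += 1
        for b in brands:
            if mentions(b, r.response):
                hits[r.model][b] += 1
    return {m: {b: hits[m][b] / totals[m] for b in brands} for m in totals}


def citation_share(results: list[ProbeResult], brands: list[str]) -> dict[str, float]:
    """Each brand's fraction of all brand mentions across every response."""
    counts = {b: sum(mentions(b, r.response) for r in results) for b in brands}
    total = sum(counts.values())
    return {b: (c / total if total else 0.0) for b, c in counts.items()}


if __name__ == "__main__":
    # Hypothetical logged responses for two models and one prompt.
    results = [
        ProbeResult("gpt", "best crm for startups?", "Many teams use Acme or Globex."),
        ProbeResult("gpt", "best crm for startups?", "Globex is a common pick."),
        ProbeResult("claude", "best crm for startups?", "Acme is often recommended."),
    ]
    brands = ["Acme", "Globex"]
    print(mention_rates(results, brands))
    # {'gpt': {'Acme': 0.5, 'Globex': 1.0}, 'claude': {'Acme': 1.0, 'Globex': 0.0}}
    print(citation_share(results, brands))
    # {'Acme': 0.5, 'Globex': 0.5}
```

Whole-word, case-insensitive matching keeps "Acme" from matching "Acmeville". Accuracy and sentiment scoring need more than string matching and are left out of this sketch.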

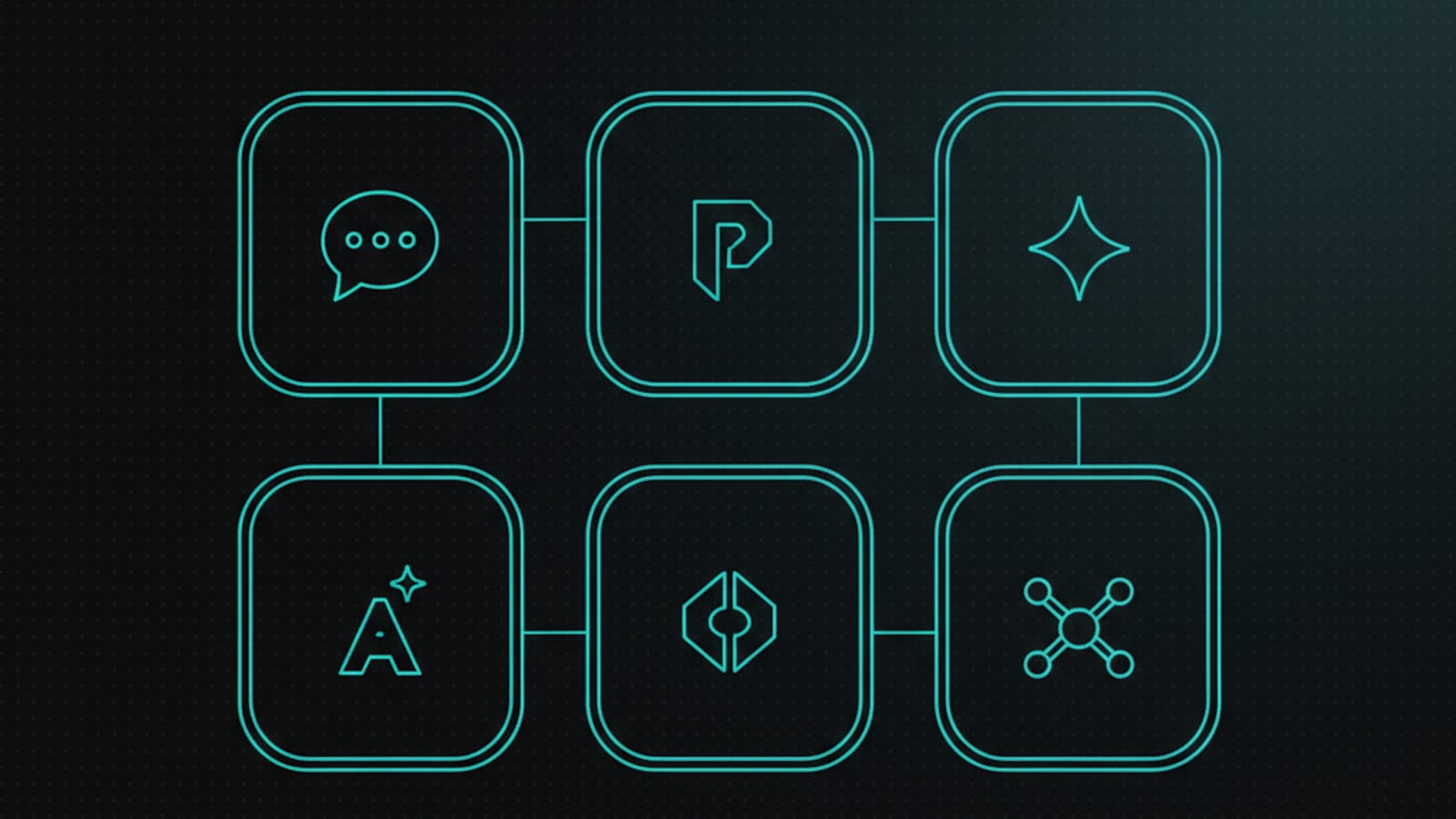

Models we optimize for

Six model families and products cover the bulk of LLM-influenced search and conversation today. Each rewards slightly different presence signals.

- GPT (OpenAI): Largest training corpus. High citation share for established brands.

- Claude (Anthropic): Conservative on facts. Rewards clean structured presence.

- Gemini (Google): Strong on web-grounded entities. Wikidata helps materially.

- Perplexity: Browsing-augmented. GEO + LLMO both move the needle here.

- Copilot (Microsoft): Bing + GPT blend. B2B and enterprise category strength.

- Open-source LLMs: Llama, Mistral, etc. Public dataset presence is the unlock.

Frequently asked questions

The questions we actually get on scoping calls — answered honestly, not in marketing voice.

What is LLMO (Large Language Model Optimization)?

How is LLMO different from GEO and AEO?

How do LLMs decide which brands to cite?

How do you get an LLM to learn my brand?

How long does LLMO take to show results?

What does the LLMO process look like?

Is LLMO worth it if my budget is small?

Can you fix bad information an LLM has about my brand?

Ready to grow with a team that actually ships?

30-minute discovery call. No slides, no pitch: just your situation, where revenue should come from next, and an honest answer about whether web development, digital marketing, AI services, or all three are the right move.